StreamGear API: Real-time Frames Mode

Overview

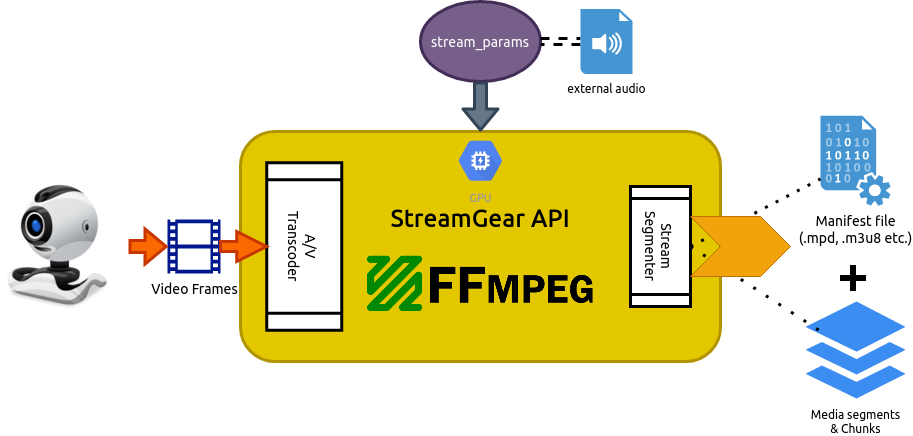

When no valid input is received on the -video_source attribute of the stream_params dictionary parameter, StreamGear API activates this mode, in which it directly transcodes real-time numpy.ndarray video frames (as opposed to an entire video file) into a sequence of multiple smaller chunks/segments for adaptive streaming.

This mode works exceptionally well when you want to flexibly manipulate or transform video frames in real time before sending them to the FFmpeg pipeline for processing. On the downside, StreamGear DOES NOT automatically map the video source's audio to the generated streams in this mode. You need to manually assign a separate audio source through the -audio attribute of the stream_params dictionary parameter.

StreamGear supports both MPEG-DASH (Dynamic Adaptive Streaming over HTTP, ISO/IEC 23009-1) and Apple HLS (HTTP Live Streaming) in this mode.

For this mode, StreamGear API provides the exclusive stream() method for directly transcoding video frames into streamable chunks.
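As a minimal sketch of how stream() might be used in this mode, the following assumes vidgear and a working FFmpeg installation. The helper name transcode_frames and its arguments are hypothetical, chosen only for illustration. The StreamGear calls follow the v0.2.x API; newer releases may prefer close() over terminate(), so check your installed version.

```python
# Parameters for Real-time Frames Mode. There is deliberately no
# "-video_source" key, since assigning one may activate Single-Source Mode.
STREAM_PARAMS = {"-input_framerate": 25.0}


def transcode_frames(frames, output="dash_out.mpd", fmt="dash"):
    """Feed an iterable of numpy.ndarray frames to StreamGear, one at a time.

    `frames`, `output`, and `fmt` are hypothetical names for this sketch.
    Returns the number of frames handed to stream().
    """
    # Imported lazily so this module loads even where vidgear is absent.
    from vidgear.gears import StreamGear

    streamer = StreamGear(output=output, format=fmt, **STREAM_PARAMS)
    count = 0
    try:
        for frame in frames:
            # Optionally manipulate or transform the frame here.
            streamer.stream(frame)
            count += 1
    finally:
        # Assumption: v0.2.x naming; later releases may use close() instead.
        streamer.terminate()
    return count
```

Any iterable of frames works as input, for example frames read in a loop from a camera or video decoder. Passing fmt="hls" with an .m3u8 output path would target Apple HLS instead of MPEG-DASH.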

New in v0.2.2

Apple HLS support was added in v0.2.2.

Real-time Frames Mode is NOT Live-Streaming.

Rather, you can easily enable live-streaming in Real-time Frames Mode by using StreamGear API's exclusive -livestream attribute of the stream_params dictionary parameter. Check out its usage example here.
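To illustrate the -livestream attribute, here is a small sketch that builds a stream_params dictionary with live-streaming enabled. The function name livestream_params is hypothetical; only the -livestream and -input_framerate keys come from the text above.

```python
def livestream_params(input_framerate):
    """Build stream_params that enable live-streaming (hypothetical helper)."""
    return {
        # Exact framerate of the incoming frames.
        "-input_framerate": float(input_framerate),
        # Switch Real-time Frames Mode into live-streaming behavior.
        "-livestream": True,
    }
```

The resulting dictionary would be unpacked into the constructor, for example StreamGear(output="dash_out.mpd", **livestream_params(30)), assuming vidgear is installed.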

Danger

- Using the transcode_source() function instead of stream() in Real-time Frames Mode will instantly result in a RuntimeError!
- NEVER assign anything to the -video_source attribute of the stream_params dictionary parameter, otherwise Single-Source Mode may get activated, and as a result, using the stream() function will throw a RuntimeError!
- You MUST use the -input_framerate attribute to set the exact value of the input framerate when using external audio in this mode, otherwise audio delay will occur in the output streams.
- Input framerate defaults to 25.0 fps if the -input_framerate attribute value is not defined.
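The rules above can be encoded in a small helper that builds stream_params and rejects combinations likely to cause trouble. This is only a sketch: realtime_params is a hypothetical function, not part of StreamGear, and the audio path in the usage note is a placeholder.

```python
def realtime_params(input_framerate=None, audio=None):
    """Build stream_params for Real-time Frames Mode (hypothetical helper).

    Never sets "-video_source", and refuses external audio unless the
    input framerate is given explicitly, to avoid audio delay.
    """
    params = {}
    if audio is not None:
        if input_framerate is None:
            raise ValueError(
                "set input_framerate explicitly when using external audio"
            )
        params["-audio"] = audio
    if input_framerate is not None:
        params["-input_framerate"] = float(input_framerate)
    # With no "-input_framerate" key, the documented default of 25.0 fps applies.
    return params
```

For example, realtime_params(input_framerate=30, audio="audio.aac") yields a dictionary suitable for unpacking into StreamGear, while realtime_params(audio="audio.aac") raises ValueError.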

Usage Examples

After going through the StreamGear Usage Examples, check out more of its advanced configurations here ➶

Parameters

References

FAQs